The Problem

While building the image processing pipelines at Uplause, I came across sharp, which describes itself as a “High performance Node.js image processing” package and claims to be typically 4x-5x faster than the quickest ImageMagick and GraphicsMagick settings. I wrote some simple AWS Lambda functions triggered by S3 PUT events and, after a bit of tweaking, had exactly what I wanted (with some pretty decent performance!).

Until… our QA team tested some HEIC images (the format recent iDevices capture in by default) and sharp threw an ugly error:

"stack": [

"Error: source: bad seek to 2181144",

"heif: Unsupported feature: Unsupported codec (4.3000)",

"vips2jpeg: unable to write to target target", ""

]

It turns out that, due to the license of Nokia’s HEIF library, HEIC support in sharp requires a globally installed libvips compiled with support for libheif, libde265 and x265. This means the prebuilt binaries do not include any support for HEIC.
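Since only some uploads hit this code path, it can help to recognise HEIC/HEIF files up front. A minimal sketch, assuming the file's first bytes are available as a Buffer: HEIF files are ISO BMFF containers whose first box is `ftyp`, followed by a four-character major brand. The helper name `looksLikeHeif` and the brand list are illustrative assumptions, not part of sharp, and the list is not exhaustive (`mif1` in particular also shows up in other HEIF-family files).

```javascript
// Major brands that commonly mark HEIF/HEIC content (illustrative, not exhaustive).
const HEIF_BRANDS = new Set(["heic", "heix", "hevc", "hevx", "mif1", "msf1"]);

// Hypothetical helper: check the first 12 bytes for an ISO BMFF "ftyp" box
// whose major brand is one of the brands listed above.
function looksLikeHeif(buffer) {
  if (!Buffer.isBuffer(buffer) || buffer.length < 12) return false;
  const boxType = buffer.toString("latin1", 4, 8);
  const majorBrand = buffer.toString("latin1", 8, 12);
  return boxType === "ftyp" && HEIF_BRANDS.has(majorBrand);
}

// Fake 12-byte header for demonstration: 4-byte box size, "ftyp", then the brand.
const fakeHeic = Buffer.concat([
  Buffer.from([0x00, 0x00, 0x00, 0x18]),
  Buffer.from("ftypheic", "latin1"),
]);
// The first 12 bytes of a typical JFIF (JPEG) file, for contrast.
const jpegHeader = Buffer.from([
  0xff, 0xd8, 0xff, 0xe0, 0x00, 0x10, 0x4a, 0x46, 0x49, 0x46, 0x00, 0x01,
]);

console.log(looksLikeHeif(fakeHeic)); // true
console.log(looksLikeHeif(jpegHeader)); // false
```

A check like this only says what the container claims to be; whether sharp can decode it still depends on how libvips was built.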

The Solution(s)

- Use heic-convert, which uses heic-decode under the hood.

- Grab a pre-built binary of libvips with support for HEIF and package sharp in a zip. Ubuntu 18.04+ provides libheif and Ubuntu 16.04+ provides libde265; both are LGPL. macOS has a formula for libheif. But if you’re using an ARM CPU like me, you’ll need to run:

SHARP_IGNORE_GLOBAL_LIBVIPS=1 npm install --arch=x64 --platform=linux sharp

That defeats the purpose of using a global installation. I tried to build and package sharp in CI, but I didn’t have much success with that either, partly because, as of writing this post, lambci does not have Docker images for node:14.x yet.

- Use a Lambda layer with the exact dependencies we need, compiled for x86 with support for HEIC.

Writing the Lambda

In a nutshell, here’s how I like to structure my Lambdas that deal with processing images:

import { GetObjectCommand, S3Client } from "@aws-sdk/client-s3";
import { Upload } from "@aws-sdk/lib-storage";
import sharp from "sharp";
import stream from "stream";

// The client constructor is synchronous, so there is nothing to await
const s3 = new S3Client({
  region: "ap-south-1",
});

// Request an object from S3; the response's Body is a readable stream
async function readStreamFromS3(Bucket, Key) {
  return await s3.send(
    new GetObjectCommand({
      Bucket,
      Key,
    })
  );
}

// Create a PassThrough stream and hand it to the S3 upload as its Body
function writeStreamToS3(Bucket, Key) {
  const pass = new stream.PassThrough();
  return {
    writeStream: pass,
    upload: new Upload({
      client: s3,
      params: {
        Bucket,
        Key,
        Body: pass,
        ContentType: "image/jpeg",
      },
    }),
  };
}

// sharp() without an input is a Duplex stream: image bytes in, JPEG bytes out
function streamSharpHEIC() {
  return sharp().rotate().toFormat("jpeg").jpeg({
    quality: 80,
    mozjpeg: true,
  });
}

export async function handler(event, context) {
  /* Omitted Regex to parse bucket name and key */
  const readStream = await readStreamFromS3(bucket, key);
  const convertStream = streamSharpHEIC();
  const { writeStream, upload } = writeStreamToS3(
    bucket,
    `converted/${uuid}.${id}.jpeg`
  );
  // Pipe the S3 object through sharp and into the upload's PassThrough
  readStream.Body.pipe(convertStream).pipe(writeStream);
  // Upload is not a promise; done() resolves once the upload has completed
  await upload.done();
}

Update (2022/06/09): Updated the above snippet to use the modular AWS SDK v3 packages (@aws-sdk/client-s3 and @aws-sdk/lib-storage) instead of the entire aws-sdk (78MB!) package.
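The handler leaves out how `bucket` and `key` are obtained. The original parsing logic is not shown, so the following is only a rough sketch of one common way to read them from a standard S3 event notification. The helper name `parseS3Event` and the sample bucket and key are made up for illustration.

```javascript
// Hypothetical helper: read the bucket name and object key from the first
// record of an S3 event notification.
function parseS3Event(event) {
  const record = event?.Records?.[0];
  if (!record?.s3) {
    throw new Error("Not an S3 event notification");
  }
  return {
    bucket: record.s3.bucket.name,
    // Object keys arrive URL-encoded, with spaces encoded as "+".
    key: decodeURIComponent(record.s3.object.key.replace(/\+/g, " ")),
  };
}

// Trimmed-down sample event; the bucket and key are invented.
const sampleEvent = {
  Records: [
    {
      s3: {
        bucket: { name: "example-uploads" },
        object: { key: "incoming/IMG+0001%282%29.HEIC" },
      },
    },
  ],
};

console.log(parseS3Event(sampleEvent));
// { bucket: 'example-uploads', key: 'incoming/IMG 0001(2).HEIC' }
```

Decoding the key matters in practice: passing the raw, encoded key to `GetObjectCommand` fails for any file name containing spaces or special characters.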

I prefer streams because they provide:

- Memory efficiency: you don’t need to load large amounts of data in memory before you are able to process it

- Time efficiency: it takes way less time to start processing data, since you can start processing as soon as you have it, rather than waiting till the whole data payload is available

And since sharp implements a stream.Duplex class, we can pipe the readStream into sharp and finally out into the writeStream.
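One caveat with chained `.pipe()` calls is that errors are not forwarded from one stream to the next. As a sketch of an alternative, Node's `pipeline()` from `stream/promises` wires up the same source → transform → sink chain and rejects if any stage fails. To keep the example runnable without AWS or sharp, a plain upper-casing `Transform` stands in for `sharp()`; the names `runPipeline` and `demo` are illustrative.

```javascript
// Minimal sketch: source -> transform -> sink using pipeline(), which rejects
// if any stage fails and cleans up the other streams.
async function runPipeline(chunks, transform) {
  // Dynamic imports keep the sketch runnable as CommonJS or as an ES module.
  const { Readable, Writable } = await import("node:stream");
  const { pipeline } = await import("node:stream/promises");

  const received = [];
  const sink = new Writable({
    write(chunk, _encoding, callback) {
      received.push(chunk);
      callback();
    },
  });

  await pipeline(Readable.from(chunks), transform, sink);
  return Buffer.concat(received);
}

async function demo() {
  const { Transform } = await import("node:stream");
  // Stand-in for sharp(): upper-cases text instead of converting an image.
  const upperCase = new Transform({
    transform(chunk, _encoding, callback) {
      callback(null, Buffer.from(chunk.toString().toUpperCase()));
    },
  });
  const output = await runPipeline(
    [Buffer.from("heic "), Buffer.from("to jpeg")],
    upperCase
  );
  return output.toString();
}

demo().then((text) => console.log(text)); // HEIC TO JPEG
```

In the Lambda, the same shape would be the S3 `Body`, the sharp instance and the `PassThrough`, awaited alongside `upload.done()`.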

However, this approach won’t work when your image processing logic relies on the metadata of the image, which requires loading the entire image into a memory Buffer.
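When metadata is needed, one option is to drain the stream into a Buffer first and hand that Buffer to sharp (for example via `sharp(buffer).metadata()`). A minimal sketch of the buffering step, where `streamToBuffer` is a hypothetical helper and an async generator stands in for the S3 object body:

```javascript
// Hypothetical helper: drain any async iterable of chunks (Node.js Readable
// streams qualify) into a single Buffer.
async function streamToBuffer(readable) {
  const chunks = [];
  for await (const chunk of readable) {
    chunks.push(Buffer.isBuffer(chunk) ? chunk : Buffer.from(chunk));
  }
  return Buffer.concat(chunks);
}

// Stand-in for an S3 object body, so the sketch runs without AWS access.
async function* fakeBody() {
  yield Buffer.from([0xff, 0xd8]);
  yield Buffer.from([0xff, 0xd9]);
}

streamToBuffer(fakeBody()).then((buffer) => {
  console.log(buffer.length); // 4
});
```

The trade-off is the one described above: the whole image sits in memory, so the function's memory setting has to account for the largest upload you expect.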

In any case, this approach is sufficient to convert HEIC/HEIF images to JPEG. Now all we need is our dependencies compiled for x86 with support for HEIC and deployed to AWS Lambda.

Lambda Layers

sharp-heic-lambda-layer solves this exact problem by providing a Lambda layer for anyone to use with their sharp functions. Again, due to licensing concerns regarding the HEVC patent group, this isn’t available as a shared Lambda layer or as an application in the AWS Serverless Application Repository. So we’ll still need to build it ourselves.

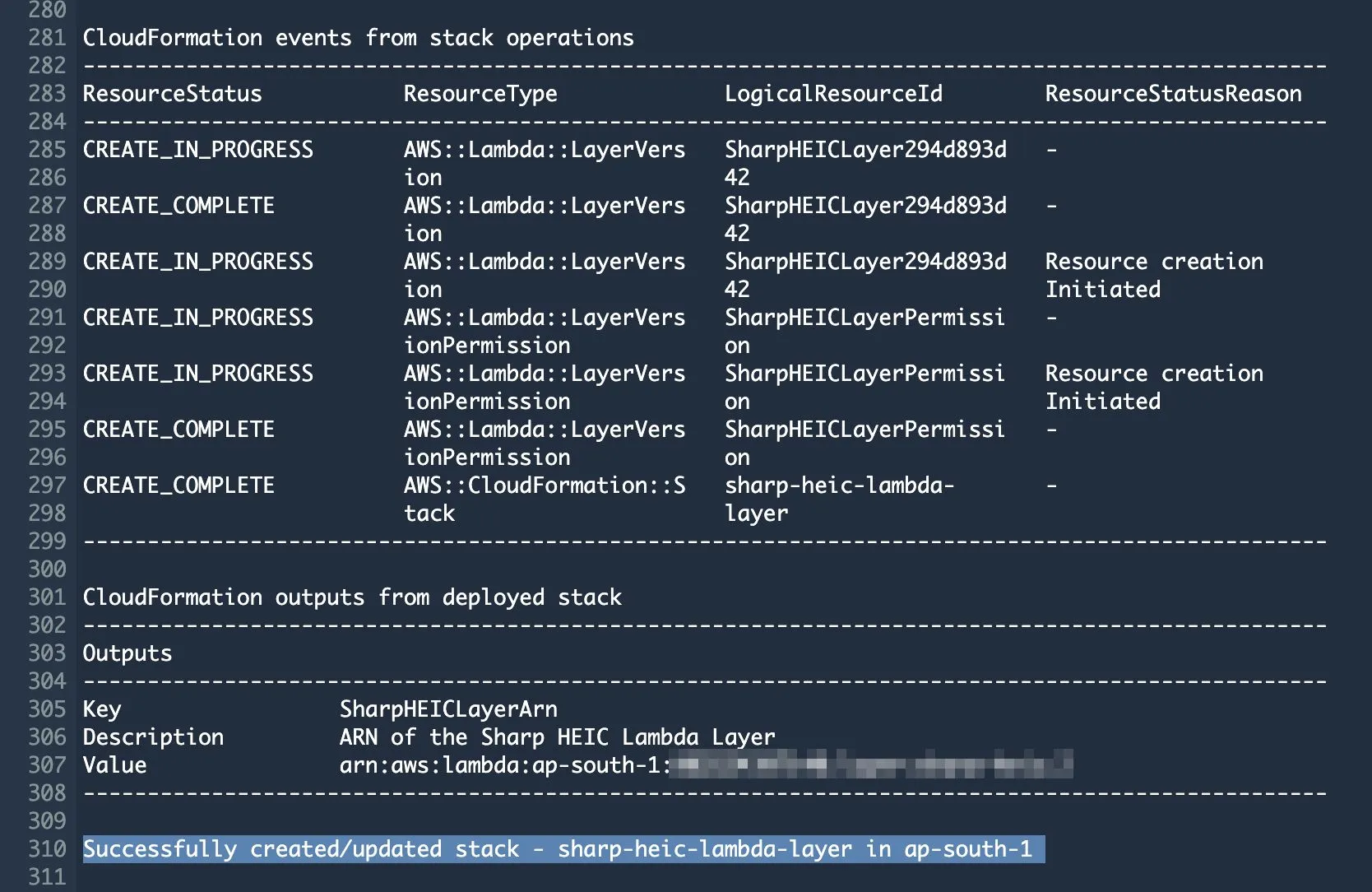

However, the author has been kind enough to provide a buildspec.yaml to be run against AWS CodeBuild, hugely simplifying the process.

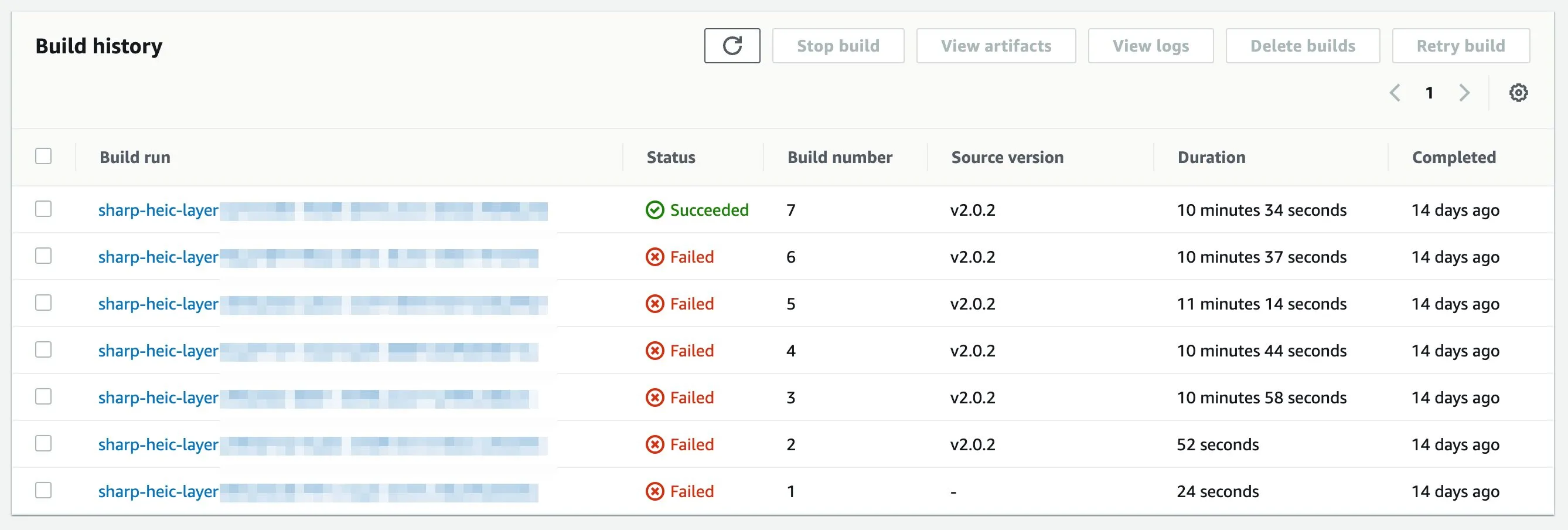

With each run taking nearly 10 minutes, you can save a lot of time by making sure up front that your IAM roles have the permissions they need to run CloudFormation, save the build artifacts to S3 and add layers to your Lambdas.

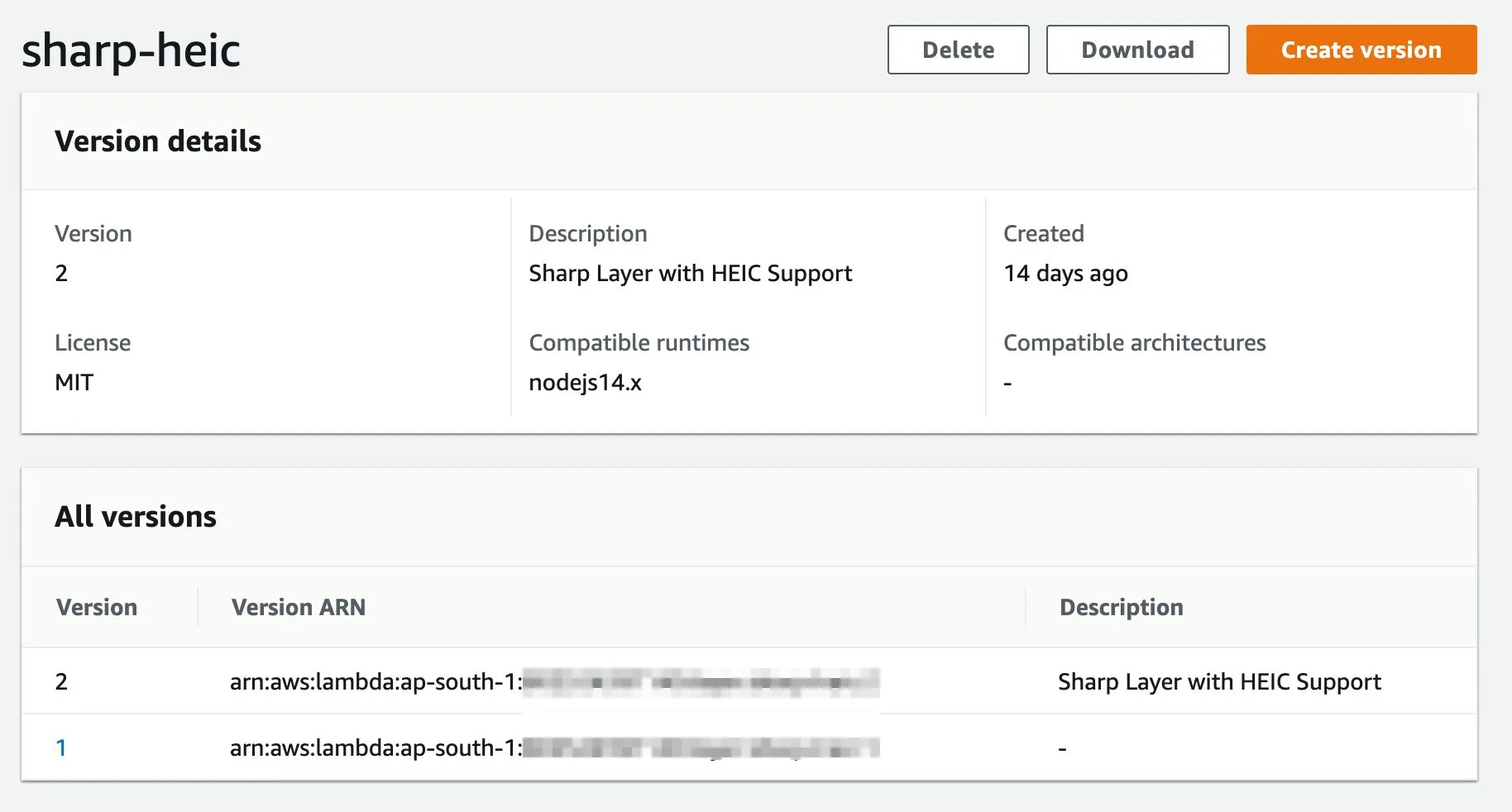

After that, it’s a simple matter of adding the generated layers to your functions.
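Layers can be attached in the console, but it can also be scripted. A rough sketch, assuming the `@aws-sdk/client-lambda` package and working credentials: updating a function's configuration replaces its entire layer list, so the new ARN has to be merged with whatever is already attached. The helper names and the ARNs below are placeholders, not values produced by the build.

```javascript
// Pure helper: Lambda replaces the whole layer list on update, so merge the
// new ARN into the existing ones instead of sending it alone.
function mergeLayerArns(existingArns, newArn) {
  // Drop other versions of the same layer (the ARN minus its trailing ":<version>").
  const baseOf = (arn) => arn.slice(0, arn.lastIndexOf(":"));
  const kept = existingArns.filter((arn) => baseOf(arn) !== baseOf(newArn));
  return [...kept, newArn];
}

// Sketch of the update itself; needs @aws-sdk/client-lambda and AWS credentials.
async function attachLayer(functionName, layerArn) {
  const {
    LambdaClient,
    GetFunctionConfigurationCommand,
    UpdateFunctionConfigurationCommand,
  } = await import("@aws-sdk/client-lambda");
  const lambda = new LambdaClient({});
  const current = await lambda.send(
    new GetFunctionConfigurationCommand({ FunctionName: functionName })
  );
  const existing = (current.Layers ?? []).map((layer) => layer.Arn);
  return lambda.send(
    new UpdateFunctionConfigurationCommand({
      FunctionName: functionName,
      Layers: mergeLayerArns(existing, layerArn),
    })
  );
}

// Placeholder ARNs for illustration only.
console.log(
  mergeLayerArns(
    ["arn:aws:lambda:ap-south-1:123456789012:layer:sharp-heic:1"],
    "arn:aws:lambda:ap-south-1:123456789012:layer:sharp-heic:2"
  )
);
// [ 'arn:aws:lambda:ap-south-1:123456789012:layer:sharp-heic:2' ]
```

Only `mergeLayerArns` runs here; `attachLayer` is defined but never called, so the snippet works without network access.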

Conclusion

After successfully adding the Lambda layer for sharp, I was able to get back to testing the image processing pipelines:

Duration: 4273.53 ms

Billed Duration: 4274 ms

Memory Size: 1536 MB

Max Memory Used: 239 MB

Init Duration: 848.92 ms

That’s about 4.3s for a 3MB image. I do still believe there’s some scope for improvement here, but right now I’m happy with the result. If I get the time, I’ll look into improving those cold start times or consider purchasing some provisioned concurrency. I’ll certainly have to look for better solutions once we scale.

Links

A few links that helped me figure things out:

- https://serverless-stack.com/examples/how-to-automatically-resize-images-with-serverless.html

- https://github.com/zoellner/sharp-heic-lambda-layer

- https://github.com/lovell/sharp/issues/1105

- https://github.com/libvips/libvips

- https://github.com/nokiatech/heif

- https://baylorrae.com/aws-lambda-heic-and-sharp/